Better LiDAR intensity measurements #3826

Comments

|

Hello @jeanlucdeziel-leddartech, |

|

Hi @DSantosO, thank you for your interest. Yes, the point cloud projection is done on the client side, using OpenCV (specifically, the cv2.projectPoints function). I project the point cloud into the intensity image, which gives a position in 2D pixel coordinates for each 3D point. Then I assign the intensity at those coordinates to each point. Because I have to round the pixel coordinates, some information is lost, so I have to use a very high resolution intensity sensor to alleviate the problem (typically at least 5-10x the resolution of the lidar). I tried interpolating instead to capture some sub-pixel accuracy, but it was slower than simply increasing the resolution.

Another note: I've been able to make this run in real time, but only because, with the way I obtain the 3D point cloud, every detection always has exactly the same direction vector. I'm not using the new lidar sensor (from 0.9.10) because I worked on this before the recent lidar improvement and I needed better 3D geometry. So I'm using a depth image sensor to generate the 3D point cloud, which always has exactly the same projection, and I only have to compute that projection once. I tried the new version, but sadly this trick doesn't work anymore: the direction vector of each detection is not constant, and having to perform the projection every frame makes it too slow.

Of course, if a proper solution is to be implemented in Carla, no projection should be necessary. The ComputeIntensity method of the RayCastLidar sensor should have direct access to the roughness, shininess, etc. and compute something similar to Phong shading (which is what I tried to mimic with my Unreal shader). If you guys have trouble with the Phong shading stuff, I can help. If you need more information, I will gladly share my code with you. |
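For readers following along, here is a minimal sketch of the project-then-look-up step described in the comment above. It is not the author's code: it swaps cv2.projectPoints for a plain distortion-free pinhole model written in NumPy, and the intrinsics `fx`, `fy`, `cx`, `cy` as well as the function names are assumptions made for illustration. It also assumes every point is in front of the camera (z > 0).

```python
import numpy as np

def project_to_pixels(points, fx, fy, cx, cy):
    """Pinhole projection of Nx3 camera-frame points (x right, y down, z forward).

    Assumes z > 0 for every point and no lens distortion (hypothetical setup).
    """
    points = np.asarray(points, dtype=float)
    z = points[:, 2]
    u = fx * points[:, 0] / z + cx
    v = fy * points[:, 1] / z + cy
    # Rounding to the nearest pixel is where sub-pixel information is lost.
    return np.rint(np.stack([u, v], axis=1)).astype(int)

def sample_intensity(image, pixels):
    """Nearest-pixel lookup; points that fall outside the image get NaN."""
    height, width = image.shape
    u, v = pixels[:, 0], pixels[:, 1]
    inside = (u >= 0) & (u < width) & (v >= 0) & (v < height)
    out = np.full(len(pixels), np.nan)
    out[inside] = image[v[inside], u[inside]]
    return out

# Toy data: a 3x4 intensity image and three points at depth 1.
image = np.arange(12, dtype=float).reshape(3, 4)
points = np.array([[0.0, 0.0, 1.0], [1.0, 0.0, 1.0], [5.0, 0.0, 1.0]])

# When the direction vectors never change, this can be computed once...
pixels = project_to_pixels(points, fx=1.0, fy=1.0, cx=1.0, cy=1.0)
# ...and only this cheap lookup has to run for every new frame.
intensity = sample_intensity(image, pixels)
```

Splitting the work this way mirrors the real-time trick mentioned above: with constant direction vectors the pixel coordinates can be cached, whereas a sensor whose directions change every frame would have to call `project_to_pixels` each time.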

|

Hello @jeanlucdeziel-leddartech, Hello @AzziNothA, |

Hello @AzziNothA, I'm also very interested in your work. Please contact me (shenhaoquan) on espace. We are at the same company and share the same research interest. |

|

This issue has been automatically marked as stale because it has not had recent activity. It will be closed if no further activity occurs. Thank you for your contributions. |

|

Hi! Are there any updates on this feature request? Thanks |

|

This issue has been automatically marked as stale because it has not had recent activity. It will be closed if no further activity occurs. Thank you for your contributions. |

|

Hi! We got inspired by this and wrote a paper about it here. I did a rough implementation which is very slow but at least works. If the devs are interested, we could implement this officially in the engine. |

|

@fgoudreault Great work, the paper looks really interesting! Will you publish the code/ your CARLA build anywhere or maybe submit a PR? |

|

Hey @Tottowich! Thanks for the feedback :) Here's the link to the paper website, where you can find the link to the code. It is not public at the moment but will be soon! I'd gladly submit a PR, but I don't think my code would be efficient enough for production environments (it is mostly Python code). I did modify some Carla code on the semantic lidar to make this work and introduced new post-processing materials, but all of these are contained in a Docker image that can be downloaded from the soon-to-be-public code link on the website. If some people would like to implement this in an official release of Carla, I'll gladly help :) |

Hi,

I implemented a custom-made shader in Unreal to try to improve the intensity measurements of the LiDAR sensor. I thought I could share this in case someone wants to take inspiration from it to implement something similar in the official version.

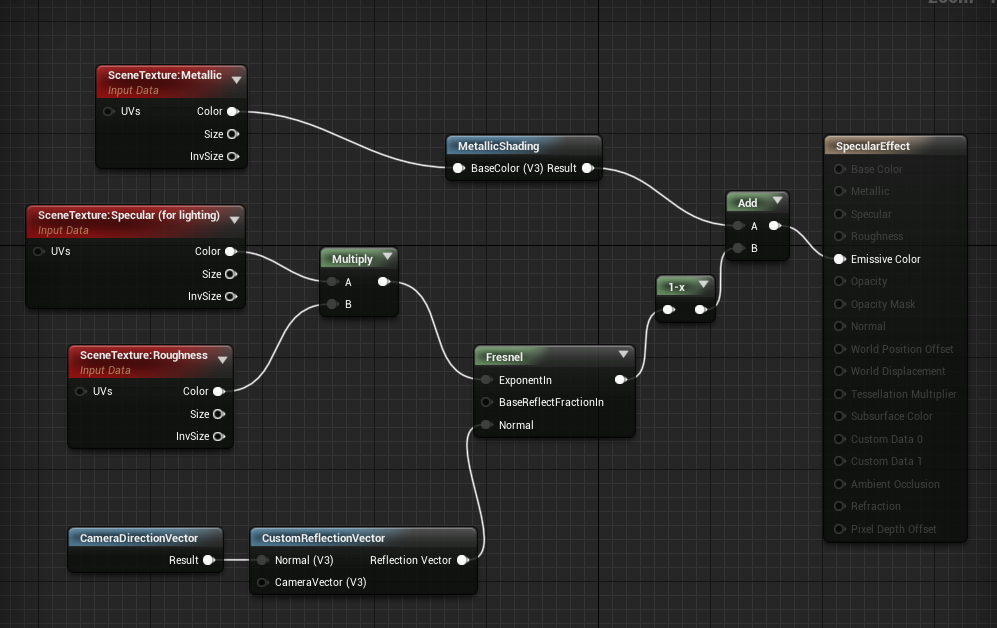

Here is the "BackScattering" shader:

The roughness, metallic and specular data from the scene texture are combined with the normals to approximate the effects of diffuse and specular reflections. The normals are with respect to the "camera", because the LiDAR emits its own light.

The shader then puts the reflections from the three sources (diffuse, specular, metallic) in the three channels of an RGB image, which I combine in post-processing to get the intensity level. See the example below for a building.

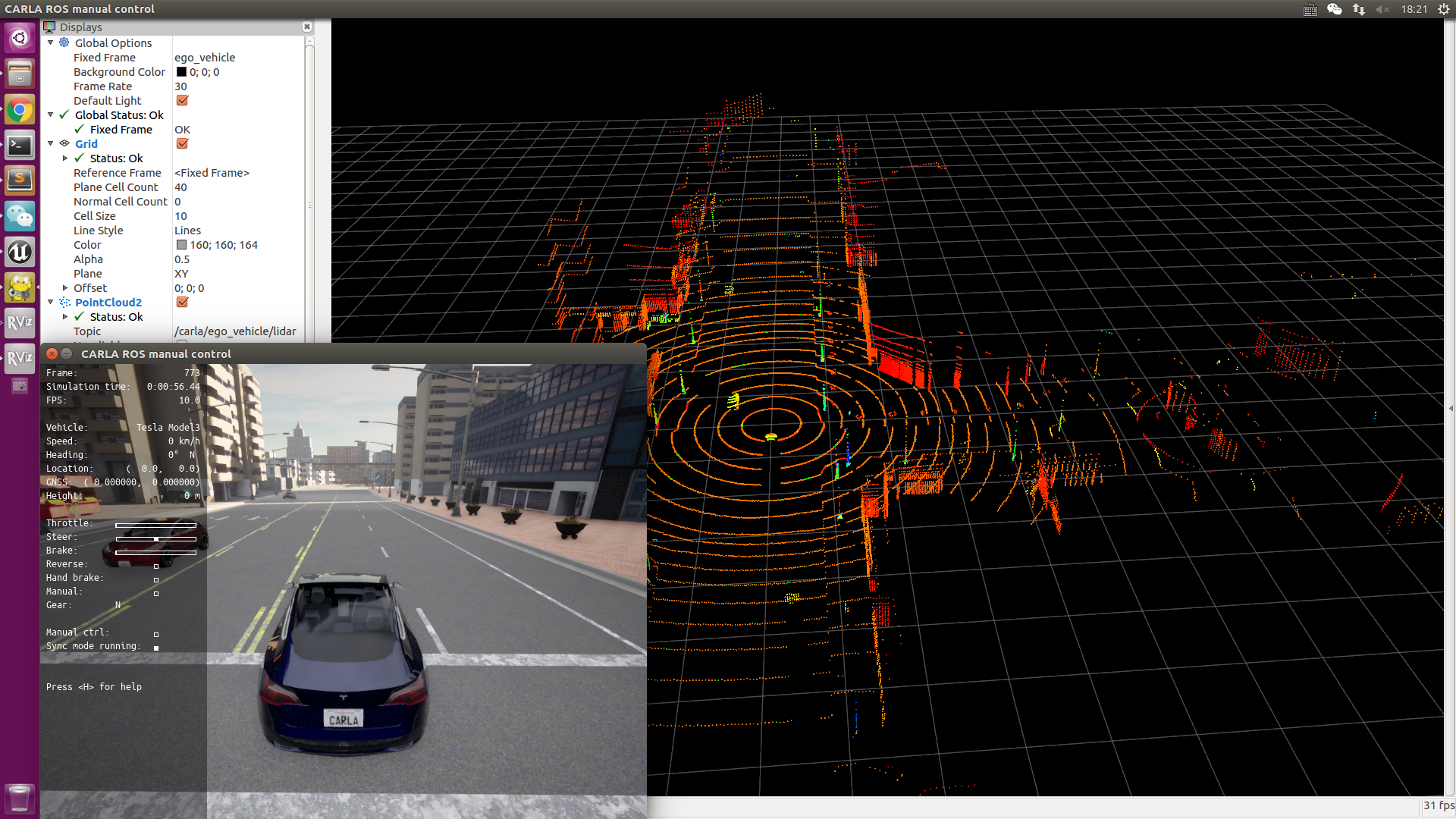
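As a rough illustration of the idea, the three channels can be sketched per surface point as below. This is not the shader from the post: the Blinn-Phong-style lobe, the roughness-to-exponent mapping, the way `metallic` and `specular` scale each term, and the equal weights in `combine` are all assumptions chosen only to show the shape of the computation. Because the LiDAR is its own light source, the light and view directions coincide, so a single cosine of the incidence angle is enough.

```python
def backscatter_channels(cos_incidence, roughness, metallic, specular):
    """Return hypothetical (diffuse, specular, metallic) terms for one point.

    cos_incidence is the dot product of the unit surface normal and the unit
    direction from the surface back toward the sensor.
    """
    c = max(0.0, cos_incidence)
    # Assumed mapping from roughness to a Blinn-Phong exponent;
    # the clamp avoids a division by zero for perfectly smooth surfaces.
    r = max(roughness, 1e-3)
    shininess = max(2.0 / (r * r) - 2.0, 0.0)
    # No return at all from surfaces seen edge-on or from behind.
    lobe = c ** shininess if c > 0.0 else 0.0
    diffuse_term = (1.0 - metallic) * c
    specular_term = specular * lobe
    metallic_term = metallic * lobe
    return diffuse_term, specular_term, metallic_term

def combine(channels, weights=(1.0, 1.0, 1.0)):
    """Post-processing step: weighted sum of the three channels."""
    return sum(w * ch for w, ch in zip(weights, channels))

# A head-on hit on a non-metallic, moderately rough surface.
head_on = backscatter_channels(1.0, roughness=0.5, metallic=0.0, specular=0.5)
intensity = combine(head_on)
```

Keeping the three terms separate until the final `combine` call matches the RGB-channel layout described above and leaves the weights free to be tuned against real sensor data.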

I've been able to implement a camera sensor that uses this shader, and I do a point cloud projection to get the intensity for each LiDAR point. However, it would be much better to have a LiDAR sensor that uses this shader directly, but I could not figure out how to do this. See an example of the results below (intensity is colored in log scale).
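For anyone reproducing the visualization, the log-scale coloring mentioned above might look like the following sketch. The function name and the `floor` value are assumptions, and the output is just a normalized value in [0, 1] that can be fed to any colormap.

```python
import math

def log_scale(intensities, floor=1e-6):
    """Map intensities to [0, 1] on a log10 scale.

    Values at or below `floor` (an assumed cutoff) are clamped so that
    zero intensities do not break the logarithm.
    """
    logs = [math.log10(max(value, floor)) for value in intensities]
    lo, hi = min(logs), max(logs)
    if hi == lo:
        # All points have the same intensity; nothing to spread out.
        return [0.0 for _ in logs]
    return [(value - lo) / (hi - lo) for value in logs]

scaled = log_scale([0.001, 0.01, 0.1, 1.0])
```

A log scale is useful here because a few strong specular returns would otherwise compress every other point into the bottom of a linear color range.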
